Modernizing high-risk systems in the age of AI.

The Review Queue is Not the Problem

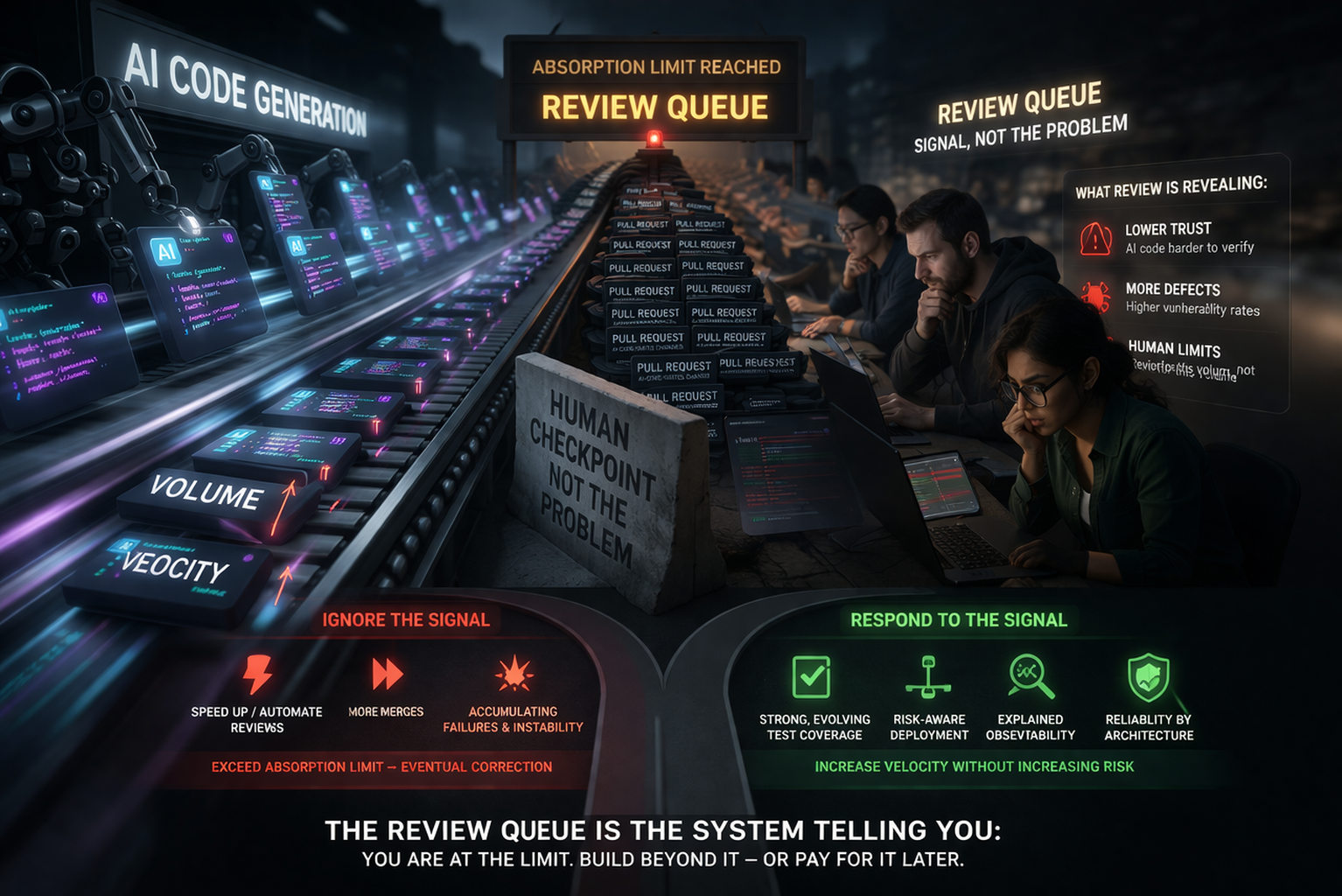

The friction in your review queue is not the bottleneck. It is the signal.

Founder, Def Method

Last time I wrote that AI made it trivial to produce code and did nothing to reduce the risk of running it. Amazon confirmed it publicly: two outages in three days traced to AI-assisted changes, and a mandated 90-day reset across its most critical systems.

But Amazon is an extreme. The more telling story is the one happening quietly in engineering organizations everywhere.

The Absorption Limit

When Cursor acquired code review startup Graphite in December, it paid well over Graphite's last private valuation of $290 million. The strategic rationale was straightforward. Cursor CEO Michael Truell said the review process had "remained largely unchanged" even as AI dramatically accelerated code writing. That gap was becoming the new constraint on shipping software.

The Cursor-Graphite deal is essentially a market signal translated into a balance sheet entry. The industry has absorbed the lesson that AI writes code faster. It is now placing expensive bets on what comes next: the humans in the review queue.

That framing is understandable. It is also wrong.

The review queue is not the bottleneck. It is a sign that the system has hit its absorption limit: more code, generated faster, arriving at a checkpoint that was never designed to absorb this volume. On teams with high AI adoption, developers complete 21% more tasks and merge 98% more pull requests, but PR review time increases 91%. The instinct is to treat that 91% as the problem to solve. Speed up review, automate it, get it out of the way.

But the review queue is not producing risk. It is simply revealing risk.
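The absorption limit can be made concrete with a toy model. The sketch below is an illustration, not a measurement: the team size, the daily rates, and the choice to apply the 98% figure to incoming pull requests are all assumptions made for the example. It shows only that once arrivals exceed review capacity, the backlog grows every day rather than settling.

```python
def simulate_backlog(arrivals_per_day, reviews_per_day, days):
    """Return the size of the review backlog at the end of each day."""
    backlog = 0.0
    history = []
    for _ in range(days):
        # New PRs arrive, reviewers clear what they can; backlog never goes negative.
        backlog = max(0.0, backlog + arrivals_per_day - reviews_per_day)
        history.append(backlog)
    return history


# Hypothetical team: 10 PRs arrive per day and reviewers can handle 10 per day.
baseline = simulate_backlog(arrivals_per_day=10, reviews_per_day=10, days=20)

# Same review capacity, 98% more PRs arriving (assumed, for illustration).
with_ai = simulate_backlog(arrivals_per_day=10 * 1.98, reviews_per_day=10, days=20)

print(f"baseline backlog after 20 days: {baseline[-1]:.1f}")
print(f"with-AI backlog after 20 days:  {with_ai[-1]:.1f}")
```

In this model the queue is not the cause of anything. It is where the mismatch between generation and absorption becomes visible.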

Measuring the Risk

The body of knowledge around AI-assisted code is growing. Veracode tested 80 coding tasks across more than 100 large language models and found that 45% introduced OWASP Top 10 vulnerabilities, with Java hitting a 72% security failure rate. A separate study found that AI-written code surfaces 1.7 times more issues than human-written code, and nearly half of developers say debugging AI output takes longer than fixing code written by people.

This is the actual problem. Not that review is slow. That what is arriving at review is harder to trust, at a volume human reviewers were not built to absorb.

When Cursor's CEO describes review as a bottleneck "to moving even faster," he is diagnosing the friction correctly and drawing the wrong conclusion. The friction is not the problem. It is the only thing standing between accelerated code generation and accelerated production failures.

To be fair to Truell, the problem he is trying to solve is real and hard. Review has always depended on something that does not exist in any repository: the accumulated judgment of everyone who has ever debugged the system at three in the morning, made the call that saved a production incident, or quietly backed out a change because something felt wrong in a way they couldn't quite articulate. That knowledge lives in people. It does not live in diffs. Graphite is a genuine attempt to make review faster and smarter. The problem is that faster and smarter review of code that is harder to trust, at higher volume, in systems that are increasingly difficult to fully comprehend, is not the same thing as safer software. It is a throughput improvement applied to a comprehension problem.

According to the 2026 State of Software Delivery report, main branch success rates have dropped to 70.8%, a five-year low. Put the other way, about 29% of what lands on main, nearly three in ten merges, is now failing. That number is not a review problem. It is a risk absorption problem: more code, higher failure rates, and growing pressure to move the human checkpoint out of the way.

Course Correction

The correct response is not to eliminate the checkpoint. It is to increase the system's capacity to absorb change. That is a different problem entirely.

It is not solved by adding reviewers or speeding up approvals. It requires building systems where safety scales with output. Test coverage that evolves alongside code generation. Deployment pipelines that route changes by risk, not convenience. Observability that explains why a change was considered safe, not just what failed. Reliability treated as a property of the architecture itself, not a process applied at the end.
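As one way to picture routing by risk, here is a minimal sketch. Everything in it is hypothetical: the `Change` fields, the path prefixes, the weights, and the thresholds are invented for illustration and would need to come from a team's own incident history. The point is the shape: each change gets a route based on what it touches, and the reasons are recorded so the decision can be explained later.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Tuple


class Route(Enum):
    FAST_LANE = "automated checks only"
    STANDARD = "one human reviewer"
    GUARDED = "senior review plus staged rollout"


@dataclass(frozen=True)
class Change:
    files: Tuple[str, ...]
    lines_changed: int
    test_lines_changed: int
    ai_assisted: bool


# Hypothetical list of paths where mistakes are expensive.
CRITICAL_PREFIXES = ("app/models/payment", "db/migrate/", "config/")


def assess(change: Change) -> Tuple[Route, List[str]]:
    """Return a route plus the reasons behind it (weights are assumptions)."""
    score = 0
    reasons = []
    if any(path.startswith(CRITICAL_PREFIXES) for path in change.files):
        score += 3
        reasons.append("touches a critical path")
    if change.lines_changed > 400:
        score += 2
        reasons.append("large diff")
    if change.test_lines_changed == 0:
        score += 2
        reasons.append("no accompanying test changes")
    if change.ai_assisted:
        score += 1
        reasons.append("AI-assisted")

    if score >= 4:
        return Route.GUARDED, reasons
    if score >= 2:
        return Route.STANDARD, reasons
    return Route.FAST_LANE, reasons


helper_fix = Change(("app/helpers/date_helper.rb",), 12, 8, ai_assisted=False)
migration = Change(("db/migrate/20240101_add_index.rb",), 30, 0, ai_assisted=True)

for change in (helper_fix, migration):
    route, reasons = assess(change)
    print(change.files[0], "->", route.value, reasons)
```

A small, tested helper change goes through on automated checks, while an untested, AI-assisted migration is sent to the guarded lane. Reviewer attention is spent where a failure would cost the most, and the recorded reasons give observability something to report beyond pass or fail.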

This is where the industry begins to split.

One path treats review as friction to remove. Those teams will move faster in the short term. They will clear their queues, increase throughput, and push more change into production. They will also discover, repeatedly, that they have exceeded their absorption limit. The result will not be a single catastrophic failure, but a steady accumulation of instability that eventually forces a correction.

The other path treats the review queue as a signal. Those teams will look slower at first. They will merge less, question more, and invest in the unglamorous work of increasing their system's capacity for safe change. Over time, they will be the only ones able to increase velocity without increasing risk.

The review queue is not the problem. It is the system telling you that you are already at the limit. What happens next depends on whether you choose to ignore it or build beyond it.
